AI BITS: LIVE.DIE.REPEAT., POST-PHOTOGRAPHY, FUTURISTIC mix-and-match & Night Springs music video.

BIT #1

11 seconds through a whole life cycle. Can you get any faster than that?! The AI-generated motion video LIVE.DIE.REPEAT. by Dreaming Tulpa is like a roller coaster ride, except that instead of going up and down we fast-forward from birth to death. The dynamics here make your mind go nuts. That’s one!

‘As humans, we comprehend time as a straight line moving in only one direction: forward, incapable of pausing or reversing. Every moment we’ve lived through, every choice we’ve made, and all the decisions we are yet to make, are forever etched onto this infinite one-way street we call time. If you believed you were destined to revisit not only your most thrilling years but also your deepest sorrows and most painful memories, over and over again, what would you change? Would you continue to cling to your darkest fears, or would you relinquish them, liberating yourself from the ceaseless cycle? LIVE.DIE.REPEAT.’

Second, the even more interesting thing about the short is the workflow behind it. Do you want to see what’s cooking in the kitchen? Make sure to visit the author’s original thread here. Incredible and very well-thought-out work!

STEP 1:

‘At the center of LIVE.DIE.REPEAT. is the endless, always forward moving loop. I knew I wanted to visualize an entire lifetime within the 12s limitation we were given. I had the visuals for the first, second and last shot in my head, so I asked GPT to help me come up with ideas for the rest. I tweaked the suggestions to my liking and the outline of the story unfolding was born.’

Dreaming Tulpa experiments with visuals, transitions, green screening, and whatnot, going back and forth between a variety of tools to create the scenes and put them all together. You should not miss it. ‘Interdisciplinary’ gets a new meaning once you see it. The process below is just one of the steps on the way. It’s nuts.

‘So I wondered how ZeroScope would handle an init video that zooms in on a still image. I went into Midjourney, generated some visuals for a few different scenes, outpainted them when necessary and asked OpenAI’s new code interpreter to generate zoom in videos of the images which I then fed into ZeroScope. And the results, while initially still very creepy, blew me away. I found a workflow that was able to visualize my vision.’

source: Twitter
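The zoom-in init videos described in the quote above can be approximated in a few lines. What follows is a minimal sketch in Python, assuming a centered, linear zoom; the function name `zoom_crop_boxes`, the frame count, and the zoom factor are illustrative and not taken from the author’s thread. It only computes the crop rectangle for each frame. Cropping each frame, resizing it back to full size, and encoding the result as a video would need an imaging library and a video encoder, which are left out here.

```python
def zoom_crop_boxes(width, height, frames, max_zoom=2.0):
    """Return one (left, top, right, bottom) crop box per frame for a centered zoom-in.

    Frame 0 shows the whole image; the last frame shows the central
    1/max_zoom portion. The zoom factor grows linearly in between.
    All names and defaults here are hypothetical, for illustration only.
    """
    if frames < 1:
        raise ValueError("frames must be at least 1")
    if max_zoom < 1.0:
        raise ValueError("max_zoom must be >= 1.0")

    boxes = []
    for i in range(frames):
        # Progress runs from 0.0 (first frame) to 1.0 (last frame).
        t = i / (frames - 1) if frames > 1 else 0.0
        zoom = 1.0 + t * (max_zoom - 1.0)

        # The visible window shrinks as the zoom factor grows.
        crop_w = width / zoom
        crop_h = height / zoom

        # Keep the window centered on the image.
        left = (width - crop_w) / 2
        top = (height - crop_h) / 2
        boxes.append(
            (round(left), round(top), round(left + crop_w), round(top + crop_h))
        )
    return boxes


if __name__ == "__main__":
    # Five frames zooming into a 1024x576 still image.
    for box in zoom_crop_boxes(1024, 576, frames=5):
        print(box)
```

Each box would then be cropped out of the still image and scaled back up to the original size, so that consecutive frames read as a smooth push-in.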

BIT #2

Are you familiar with the term post-photography? It has been around for a while now. Nothing new, but in our humble opinion, and in that of some generative AI adopters and artists, it perfectly describes the new transitional reality we are living in. Our conventional perceptions of what images should look like and represent are constantly being challenged. And post-photography is, in fact, a process of creation.

‘Post-photography is not a style or a historical movement but a rerouting of visual culture…it defines a new relationship we’ve adopted with our images.’

– Joan Fontcuberta*

Isn’t that very much true of AI-generated photography too? Let’s see!

artist: @0xStreets / source: Twitter

artist: @andrea_ciulu / source: Twitter

artist: @stas_kulesh / source: Twitter

artist: @KaliYuga_ai / source: Twitter

artist: @fellowshiptrust / source: Twitter

No camera, just photography.

BIT #3

Here’s one from one of our favorites, Alie Jules (@saana_ai): a handy list of futuristic words for Midjourney. What for? Well, see for yourself, just mix-and-match…

Artificial consciousness

Asteroid mining

Biohacking

Bioluminescence

Cybernetic organism

Dark energy

Dark matter

Digital twin

Drone swarm

Dyson sphere

Exoskeleton

Exoplanet

Fusion power

Galactic civilization

Genome

Gravity manipulation

Higgs Boson

Holography

Hyperautomation

Hyperloop

Hyperspace

Multiverse

Nanotechnology

Necrobotics

Neuralink

Neuromorphic engineering

Post-scarcity economy

Quantum

Quantum cryptography

Quantum entanglement

Quantum foam

Quantum teleportation

Quasar

Solar sail

Space elevator

Superintelligence

Synthetic

Synthetic intelligence

Tachyons

Terraforming

Time travel

Zero-point energy

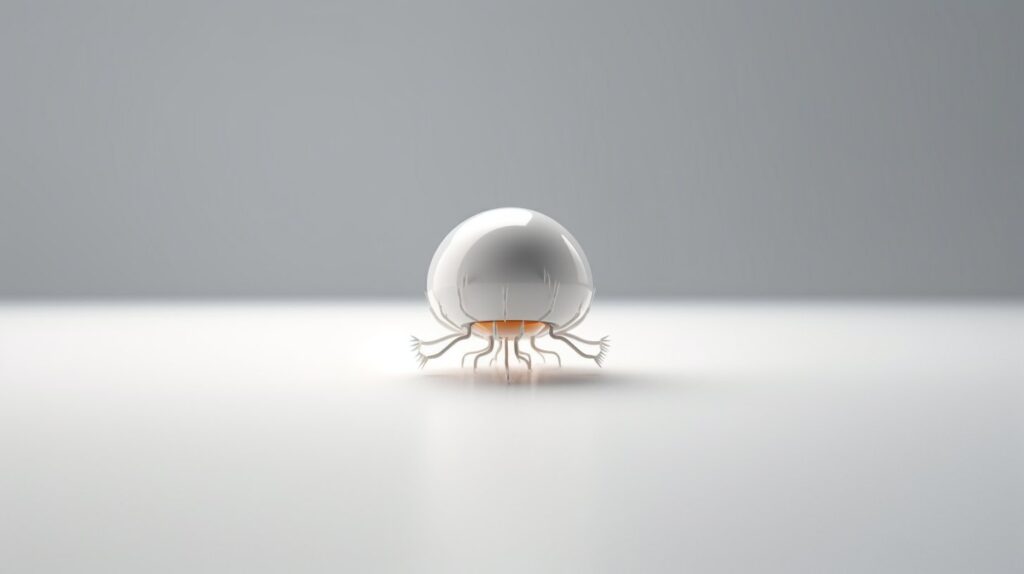

artist: @saana_ai / prompt: neuralink, macro photography, white, minimalist --ar 16:9 / source: Twitter
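The mix-and-match idea is easy to try out in code. Here is a minimal sketch in Python, assuming you simply want to pick a few words from the list at random and join them with a style suffix. The function name `mix_and_match` and its defaults are illustrative; the style suffix is copied from the example prompt above, and `--ar` is Midjourney’s aspect ratio parameter.

```python
import random

# A few entries from the word list above; extend with the rest as needed.
FUTURISTIC_WORDS = [
    "Bioluminescence", "Dyson sphere", "Exoskeleton", "Neuralink",
    "Quantum foam", "Solar sail", "Space elevator", "Terraforming",
]


def mix_and_match(words, count=2,
                  style="macro photography, white, minimalist",
                  aspect_ratio="16:9", rng=None):
    """Build a prompt string from `count` distinct, randomly chosen words.

    Hypothetical helper for illustration; pass a seeded random.Random
    as `rng` to get repeatable picks.
    """
    if rng is None:
        rng = random.Random()
    picks = rng.sample(words, count)  # distinct words, random order
    subject = ", ".join(word.lower() for word in picks)
    return f"{subject}, {style} --ar {aspect_ratio}"


if __name__ == "__main__":
    # Print three candidate prompts to paste into Midjourney.
    for _ in range(3):
        print(mix_and_match(FUTURISTIC_WORDS))
```

Swapping the style suffix or raising `count` is a quick way to explore combinations before spending any generations on them.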

NSD (@NSD_ai) went all in by throwing all of the words into a single raw prompt. This is what came out!

artist: @NSD_ai / source: Twitter

Visit the great thread here.

BIT #4

We simply can’t get enough of what’s new in generative motion AI. We have jumped down the rabbit hole with many creators, but Steve Mills (@SteveMills) has a very special place in there. He has just produced a full-scale music video with a storyline, entitled ‘Night Springs’, for a band called Assembler 2084, with every shot generated text-to-video in Gen-2.

‘As well as showcasing this awesome song, the aim was to tell a longer narrative with coherent setting and characters. Video footage generated entirely via text prompts with @RunwayML

Editing in Resolve’

artist: @SteveMills / toolkit: RunwayML, Resolve / source: Twitter

You can watch the full version in HD on YouTube here. Wow!